Why Agentic AI Requires Graph-Based Observability

The industry is currently flooded with vendors claiming to have “solved” AI observability, but when you view them through the lens of enterprise architecture, their solutions fall apart.

Recently, I received a call from a former client whose AI agent was making decisions they could not trace, even though they had invested in observability tooling.

They had deployed a sophisticated procurement agent to manage their raw materials inventory. The agent was designed to read the Bill of Materials (BOM) for incoming orders and automatically interact with the ERP to ensure supply.

It had been running smoothly for weeks. Then, one night, the agent confidently issued a purchase order for 5,000 gallons of highly reactive industrial solvent that the company absolutely did not need. It was a $200,000 mistake executed in milliseconds.

The engineering team did what they were trained to do: they opened their AI observability dashboards to debug the failure.

They were using one of the industry-standard LLMOps platforms (think LangSmith or Arize AI). They opened the specific trace, expecting to see a giant red error box.

Instead, the dashboard showed a 100% success rate.

The token usage was optimal. The latency was fine. The visual Directed Acyclic Graph (DAG) in the UI showed a perfectly clean execution path: the agent checked the ERP, saw a shortage, queried the vector DB for an alternative, found the solvent, and successfully executed the Create_Purchase_Order tool.

According to modern AI observability tools, the agent performed flawlessly. The JSON parsed correctly. The APIs returned 200 OK.

The tools were completely blind to the fact that the agent had just committed a catastrophic logical error. This is the “Silent Success” problem of Agentic AI, and it exposes a massive architectural flaw in how the industry approaches observability.

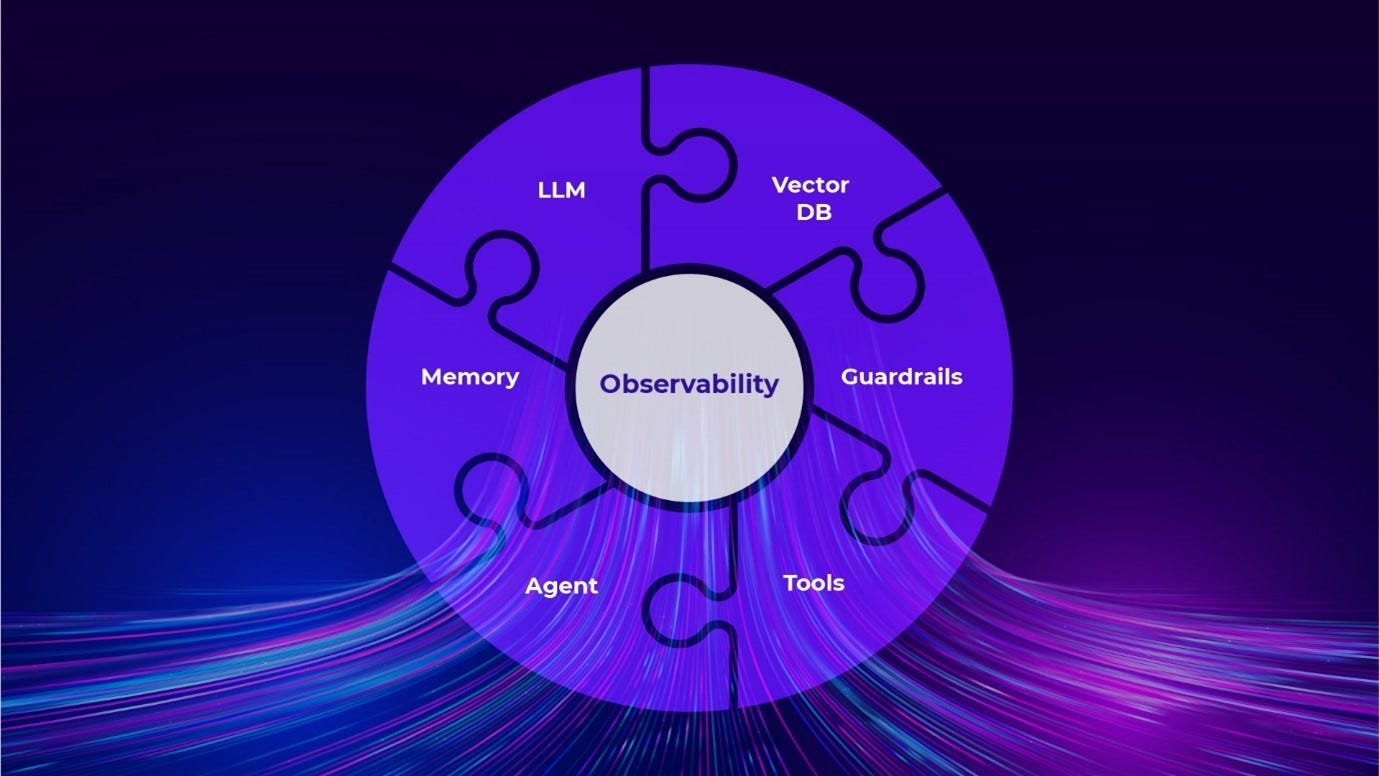

The AI Observability Landscape

The industry is currently flooded with vendors claiming to have “solved” AI observability. When you view them through the lens of enterprise architecture, their solutions fall apart because they suffer from a severe semantic blind spot.

The Giants (Datadog, Dynatrace, New Relic, etc.)

To be fair, these platforms have evolved. They boast “Interactive Dependency Graphs” and “Entity Maps.” But look at what these graphs actually map: infrastructure and service flow, not reasoning.

They are built for deterministic microservices. They treat an LLM call exactly like a SQL query.

A 200 OK HTTP status code between your agent container and the OpenAI endpoint means nothing if the agent just authorized a catastrophic purchase order.

They map the servers, but they are blind to the thought process.

The AI-Focused (Arize AI, LangSmith, Kore.ai, etc.)

These platforms specifically target AI, and their marketing heavily touts “agentic tracing” and “graph visualization.” They do a great job of mapping a single agent’s trajectory.

But under the hood, they are still fundamentally OpenTelemetry (OTel) log stores. They suffer from Trace Isolation. They capture the agent’s actions perfectly, but that trace exists in a vacuum.

The tool does not know your business logic.

If an agent skips a mandatory safety check, LangSmith simply shows you a UI graph where the “Safety Check” node isn’t there.

Because the tool doesn’t know the rules of your enterprise, it assumes the agent’s path was correct. These platforms treat the graph as a UI visualization of an ephemeral log, not as a computable mathematical structure tied to reality.

The Solution

If an agent’s execution is a branching decision tree, the observability layer must be a native graph database (like Neo4j) that already holds your company’s business logic.

For this client, I persisted the agent’s execution state directly into Neo4j, the same database where the company’s manufacturing ontology (their Enterprise Knowledge Graph) already lived.
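As a rough illustration of what writing execution state into the graph can look like, here is a minimal sketch that turns one tool call into a parameterized Cypher write. It assumes the `AgentSession` label and `TOOL_CALL` relationship used in the query further down; the function name, the `InventoryCheck` label, and the property names are hypothetical and not taken from the client's system.

```python
from typing import Any

# Labels cannot be passed as ordinary query parameters in Cypher, so the
# label is interpolated from a fixed allow-list, never from agent output.
# "InventoryCheck" is a hypothetical label added for illustration.
ALLOWED_TOOL_LABELS = {"PurchaseOrder", "SafetyMatrixCheck", "InventoryCheck"}


def build_tool_call_write(
    session_id: str, tool_label: str, properties: dict[str, Any]
) -> tuple[str, dict[str, Any]]:
    """Return a (query, params) pair that records one tool call."""
    if tool_label not in ALLOWED_TOOL_LABELS:
        raise ValueError(f"unknown tool label: {tool_label!r}")
    query = (
        "MERGE (trace:AgentSession {id: $session_id}) "
        f"CREATE (trace)-[:TOOL_CALL]->(call:{tool_label}) "
        "SET call += $properties"
    )
    return query, {"session_id": session_id, "properties": properties}


query, params = build_tool_call_write("s-1", "PurchaseOrder", {"quantity": 5000})
print(query)
print(params)
```

The returned pair can be handed to whatever client you use, for example `session.run(query, params)` with the official Neo4j Python driver. Linking the new node to ontology nodes such as `Material` would be an additional step that this sketch leaves out.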

We ran the agent in a shadow environment, and a week later, it attempted the exact same hallucination.

This time, we didn’t look at a UI visualization of a single trace. We mathematically queried the agent’s behavior against the laws of the business.

Because both the Agent Trace and the Enterprise Ontology lived in the same database, we could write a Cypher query to perform an “Ontological Join”:

```cypher
// Find any execution trace where the agent ordered a hazardous material
// WITHOUT executing a corresponding safety check tool.
MATCH (trace:AgentSession)-[:TOOL_CALL]->(po:PurchaseOrder)-[:TARGETS_ITEM]->(item:Material)
MATCH (item)-[:HAS_PROPERTY]->(prop:HazardLevel {value: 'High'})
WHERE NOT (trace)-[:TOOL_CALL]->(:SafetyMatrixCheck)
RETURN trace.id, item.name
```

The graph revealed the missing semantic edge. The agent had queried the vector database for an alternative chemical. It found “Solvent Y-200”. In the company’s ontology, Y-200 is linked to a [HazardLevel: High] node, which strictly requires a [:SAFETY_CLEARANCE] edge.

The agent had bypassed the safety check tool entirely.

LangSmith couldn’t catch this because LangSmith doesn’t know what “Solvent Y-200” is. It only knows what the LLM typed.

Neo4j caught it instantly because it cross-referenced the agent’s ephemeral trace against the physical reality of the business.

What else is needed?

Once you have Native Graph Evals in place, you stop guessing and start engineering. Seeing the failure is step one. Preventing it requires expanding your architecture beyond trusting the LLM.

Here is what else you must implement when deploying agents to production:

Deterministic Tool Gateways

An LLM should never speak directly to an ERP or a production database.

You must build a middleware gateway between the agent’s output and the actual API execution. Using our Neo4j setup, we built a gateway that intercepts the Create_Purchase_Order tool call, queries the graph to ensure the [Safety_Check] node exists in the current session trace, and blocks the API call if the graph topology is invalid.
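The gateway idea can be sketched in a few lines. This is an illustrative stand-in, not the gateway we built: the session trace and the hazard lookup are in-memory structures here, where the real version would query the graph. `SessionTrace`, `HAZARD_LEVEL`, `BlockedToolCall`, and `gate_purchase_order` are hypothetical names, and treating unknown items as hazardous is my own fail-closed assumption.

```python
from dataclasses import dataclass, field


@dataclass
class SessionTrace:
    """In-memory stand-in for the session's trace subgraph."""
    session_id: str
    tool_calls: list[str] = field(default_factory=list)


# Hypothetical stand-in for the ontology lookup (item -> hazard level).
HAZARD_LEVEL = {"Solvent Y-200": "High", "Packing Tape": "Low"}


class BlockedToolCall(Exception):
    """Raised when the trace topology does not permit the tool call."""


def gate_purchase_order(trace: SessionTrace, item: str) -> None:
    """Let a purchase order through only if the required check is in the trace."""
    # Assumption: items missing from the ontology are treated as hazardous,
    # so the gateway fails closed rather than open.
    hazard = HAZARD_LEVEL.get(item, "High")
    if hazard == "High" and "SafetyMatrixCheck" not in trace.tool_calls:
        raise BlockedToolCall(f"{item}: no safety check in session {trace.session_id}")
    # Only now would the real ERP API be called; here we just record the call.
    trace.tool_calls.append("PurchaseOrder")


trace = SessionTrace("s-1")
try:
    gate_purchase_order(trace, "Solvent Y-200")
except BlockedToolCall as err:
    print("blocked:", err)
```

The point of the design is that the decision to call the ERP is made by deterministic code reading the graph, not by the model.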

State-Bound Prompts

Do not give an agent all 15 of its tools in the initial system prompt. That is begging for hallucinations.

Use the graph state to dynamically inject only the tools that are valid for that specific moment.

If the agent has not successfully completed the Inventory_Check node, the Create_Purchase_Order tool should literally not exist in its context window.
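A minimal sketch of that filtering step, assuming the set of completed nodes has already been read from the session graph. The prerequisite map is hypothetical: only the dependency of Create_Purchase_Order on Inventory_Check comes from the example above, and the rest is invented for illustration.

```python
# Hypothetical prerequisite map: tool name -> nodes that must already be
# completed in the session graph before the tool is offered to the model.
TOOL_PREREQUISITES: dict[str, set[str]] = {
    "Inventory_Check": set(),
    "Safety_Check": {"Inventory_Check"},
    "Create_Purchase_Order": {"Inventory_Check", "Safety_Check"},
}


def available_tools(completed: set[str]) -> list[str]:
    """Return the tools whose prerequisites are all satisfied, sorted by name."""
    return sorted(
        tool
        for tool, required in TOOL_PREREQUISITES.items()
        if required <= completed  # subset test: every prerequisite is done
    )


print(available_tools(set()))
print(available_tools({"Inventory_Check", "Safety_Check"}))
```

The list returned for the current state is what would be passed to the model as its tool definitions for the next turn, so an unavailable tool never appears in the context window at all.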

Macro-Topological Analysis

Stop looking at crashes one by one.

With a native graph database, you can run PageRank or Cycle Detection algorithms across millions of historical traces simultaneously.

You might find, for example, that 90% of your token-burning infinite loops happen only when the agent calls Tool A immediately after failing Tool B.
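To make the kind of aggregate question concrete, here is a small pure-Python sketch over a handful of traces. It is a simplification: it flags a trace as looping with a repeat-count heuristic rather than running graph cycle detection or PageRank, and the trace format, function names, and threshold are all assumptions made for illustration.

```python
from collections import Counter

Step = tuple[str, bool]  # (tool name, succeeded?)


def is_looping(trace: list[Step], max_calls: int = 3) -> bool:
    """Heuristic: a trace loops if any single tool is called more than max_calls times."""
    counts = Counter(tool for tool, _ in trace)
    return any(n > max_calls for n in counts.values())


def has_pattern(trace: list[Step], failed_tool: str, next_tool: str) -> bool:
    """True if next_tool is called immediately after failed_tool fails."""
    return any(
        prev == (failed_tool, False) and cur[0] == next_tool
        for prev, cur in zip(trace, trace[1:])
    )


def loop_share_with_pattern(
    traces: list[list[Step]], failed_tool: str, next_tool: str
) -> float:
    """Fraction of looping traces that contain the failure-then-call pattern."""
    looping = [t for t in traces if is_looping(t)]
    if not looping:
        return 0.0
    hits = sum(has_pattern(t, failed_tool, next_tool) for t in looping)
    return hits / len(looping)


traces = [
    [("B", False)] + [("A", True)] * 4,  # loops, and A follows a failed B
    [("A", True)] * 4,                   # loops, but without the pattern
    [("B", False), ("A", True)],         # has the pattern but does not loop
]
print(loop_share_with_pattern(traces, failed_tool="B", next_tool="A"))
```

In a graph database the same question becomes a query over stored traces instead of a Python loop, which is what makes it feasible at the scale of millions of sessions.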

Conclusion

If you deploy autonomous agents to production using isolated trace stores, you are not deploying software. You are deploying a dangerous, expensive black box.

Native graph observability shifts AI from “magic” back to determinism. By interrogating the topology of the agent’s reasoning against the ontology of your business, you stop relying on “Silent Successes.”

You patch the system prompt, build a gateway, or constrain the toolset precisely at the node where the graph broke.

Stop treating agents like magic functions. Treat them like autonomous state machines traversing a graph, and build the infrastructure to observe them accordingly.

If you want to see exactly how to build this architecture in production, I am doing a deep dive on this exact topic at Neo4j’s NODES AI.

Register for free and come see the graph in action.