Why Flat Logs Cannot Debug AI Agents

The $240,000 order was not a failure of the language model, the prompt engineering, or even the multi-agent architecture itself. It was a catastrophic failure of enterprise observability.

Not long ago, an incident at one of my manufacturing clients cost a quarter of a million dollars.

They had deployed seven AI agents and tested them thoroughly before pushing them to production. The agents' explicit job was to manage the bill of materials, keep inventory aligned, and place orders automatically. There was no human in the loop. That was the entire point of the architecture.

One Tuesday morning the automated procurement agent placed a purchase order for 500 gallons of industrial solvent. It ordered the completely wrong chemical type. The final cost included the wasted material, the specialized hazardous disposal fees, and the massive production delays.

The total financial damage was $240,000.

The engineering team immediately pulled the production logs to find the bug. They exported 38,000 lines of flat JSON. They had every tool you would expect from a modern and well instrumented system. They had beautiful LangSmith traces. They had OpenTelemetry spans. They had deeply structured logging.

It took the team 90 hours to find the root cause. Five senior engineers spent three full days running grep commands through logs and manually diffing prompt versions. They desperately tried to reconstruct which piece of context from which specific agent led to this catastrophic financial decision.

The revelation hit them on day three. The problem was not an LLM hallucination. It was not a bad prompt design. It was not even a traditional software bug.

The problem was that Agent 2 passed stale context to Agent 5. Agent 5 then used that outdated context to inform a material decision which triggered Agent 7 to execute the final purchase order.

The observability tools could easily show what happened. They showed the exact purchase order. The tools could show when it happened by matching the timestamp in the logs. But the tools completely failed to show why it happened. They could not expose the causal chain of context propagation across multiple independent agents.

This is the story of why flat logs cannot debug graph problems. Every engineering team deploying multi-agent systems is about to learn this lesson the hard way.

Flat Logs and Graph Decisions

Let’s analyze why traditional observability breaks down the moment you introduce autonomous agents into a production environment.

If you build distributed systems you already know the standard playbook. You use structured logging for individual events. You use distributed tracing like OpenTelemetry for request flows. You use observability platforms like Datadog or LangSmith for visualization.

This playbook works beautifully for microservices. An HTTP request flows predictably through Service A then Service B then Service C. The trace is entirely linear. The causality is strictly sequential.

Agents do not think linearly. They branch when inventory falls below a threshold and they delegate tasks to specialized peers. They retry failed tool calls with modified context. They loop back to reevaluate decisions based on updated state.

The Raw Telemetry Comparison

Look at the standard flat log output you see in a terminal.

    [09.18.32] agent=bom_reader action=fetch_spec file=spec_v2.json // Bad spec file
    [09.22.47] agent=procurement action=query_supplier target=ABC_Corp
    [09.23.41] agent=procurement action=place_order qty=500

You can see exactly what happened but you cannot see why.

Now look at the graph reality represented as a linked structure.

    (Context file="spec_v2.json" state="STALE")
      -[PROVIDED_CONTEXT]-> (Decision action="Select Supplier")
      -[TRIGGERED]-> (ToolCall action="place_order" qty=500 state="FLAGGED")

Causality is completely visible.

Look closely at what each data structure physically records to see the exact difference.

When you look at the flat logs you see isolated events attached to timestamps. As humans reading a simple three line example we assume the first event caused the second event simply because they happened sequentially. However in a real production environment there might be thousands of other logs recorded between those two timestamps by dozens of different agents. The flat log provides absolutely no structural proof that the specific file fetched at 09.18 was the exact data used to place the order at 09.23. It only tells you when things happened. You are forced to guess the relationship based on chronological proximity.

Now look at the graph model. The graph does not rely on guessing based on time. It uses explicit structural edges to link data together. The edge labeled PROVIDED_CONTEXT is a literal database relationship tying the specific stale JSON file directly to the decision node. The edge labeled TRIGGERED physically links that exact decision to the final tool call.

Flat logs force you to guess causality based on time. The graph provides true causality because it explicitly records the relationships between inputs, decisions, and outputs as permanent links in the database. You do not have to guess what caused the bad order because the graph draws a direct line straight to the stale context file.
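To make the contrast concrete, here is a minimal in-memory sketch in Python. The node ids (`ctx1`, `d1`, `tc1`) and the `why` helper are hypothetical names chosen for illustration; only the edge types and property values come from the example above. It walks edges backward from the tool call, which is the one thing a timestamp-ordered log cannot do without guessing.

```python
# Nodes keyed by a hypothetical id; properties follow the linked structure above.
nodes = {
    "ctx1": {"label": "Context", "file": "spec_v2.json", "state": "STALE"},
    "d1": {"label": "Decision", "action": "Select Supplier"},
    "tc1": {"label": "ToolCall", "action": "place_order", "qty": 500},
}

# Each edge is (source id, relationship type, target id).
edges = [
    ("ctx1", "PROVIDED_CONTEXT", "d1"),
    ("d1", "TRIGGERED", "tc1"),
]


def why(node_id):
    """Return every upstream node that reaches node_id, nearest first."""
    found, frontier, seen = [], [node_id], {node_id}
    while frontier:
        current = frontier.pop(0)
        for src, _rel, dst in edges:
            if dst == current and src not in seen:
                seen.add(src)
                found.append(src)
                frontier.append(src)
    return found


causes = why("tc1")
stale_inputs = [nodes[n]["file"] for n in causes if nodes[n].get("state") == "STALE"]
print(causes)        # upstream node ids
print(stale_inputs)  # files behind the order that were marked stale
```

No timestamps are consulted anywhere in the traversal. The answer comes only from recorded edges.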

LangSmith gives you beautiful traces. But those traces are trees not graphs. They show parent child relationships indicating which agent called which tool. They do not show causality indicating which specific context payload influenced which downstream decision.

Quick check. If your agent made a bad decision right now, could you instantly trace which exact context file influenced it? If your honest answer is that you would have to grep the logs and reconstruct the timeline manually, then you have a severe graph problem.

Without causality tracing your investigation reality is brutal. You pull 38,000 lines of logs. You search for the order SKU. You find the decision that triggered it. You manually trace backwards to see which tool calls preceded the order. You diff the prompt versions to see if Agent 5 received the correct instructions. You hunt down the context sources to see where the specification came from. Eventually you discover that Agent 2 used a cached file from three weeks ago. You reconstruct this entire propagation path manually over 90 hours.

Agents make decisions in a complex graph structure. Observability tools built for linear sequences can never show you the why.

The Solution (Thinking in Graphs)

We evaluate this monitoring failure and reach a clear architectural mandate.

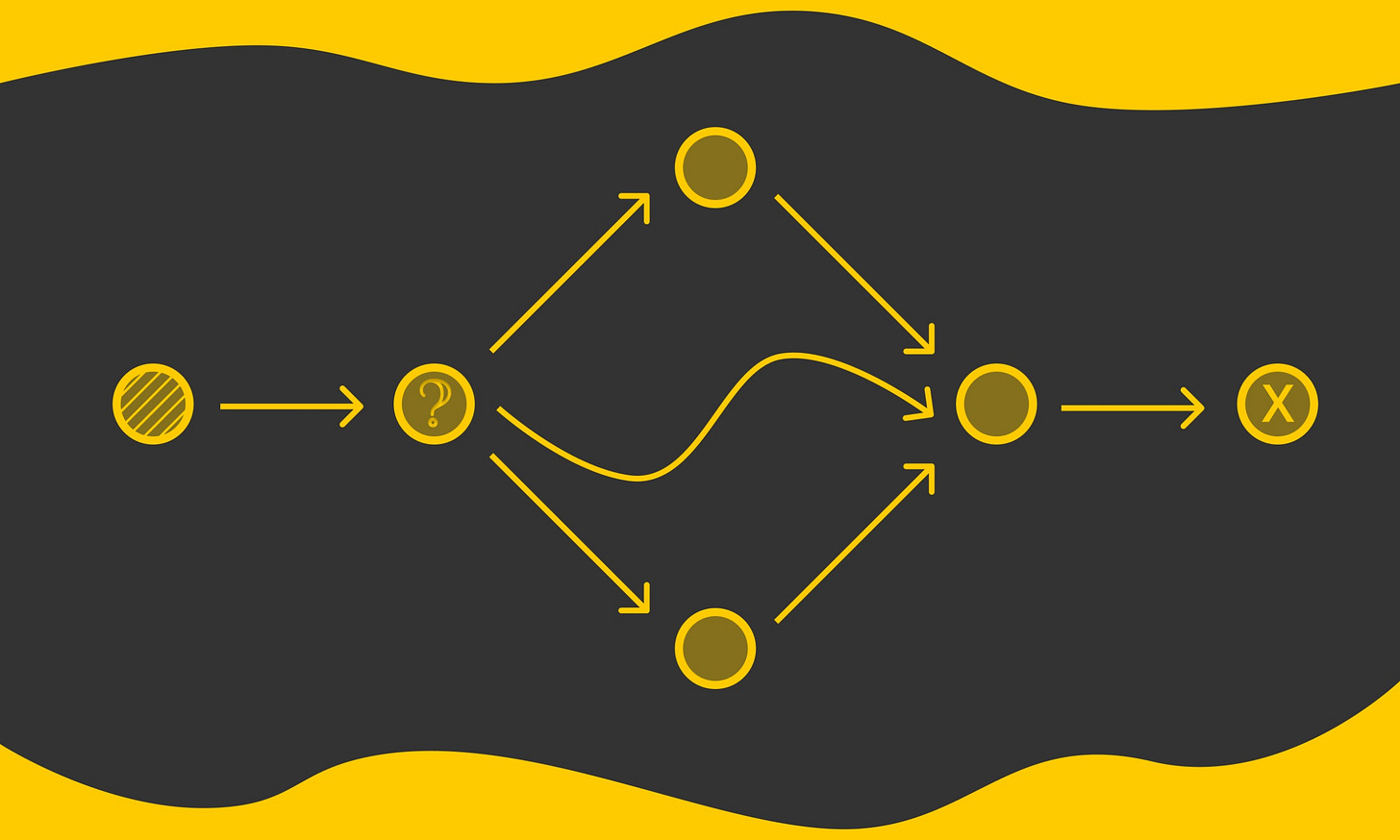

You must stop treating agent traces as chronological logs and must start treating them as graphs.

This requires a fundamental mental shift. Every agent decision is a node in a graph. Every handoff between agents is an edge connecting those nodes. Every piece of context is a node of its own, linked by an edge to each decision it influenced.

We need to define a strict data model to capture this reality.

Nodes

AgentRun containing id, agent_name, model, and started_at

Decision containing id, reasoning, confidence, and timestamp

ToolCall containing tool_name, input, output, and duration

Context containing source, content_hash, retrieved_at, and stale

Edges

MADE a decision

TRIGGERED a tool call

DELEGATED_TO other Agent (Node)

PROVIDED_CONTEXT to a Decision or Agent

Flow

AgentRun → MADE → Decision

Decision → TRIGGERED → ToolCall

Decision → DELEGATED_TO → AgentRun

Context → PROVIDED_CONTEXT → Decision
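The model above can be sketched as plain Python types before any database is involved. This is one possible encoding, not a required schema. Field names are written in snake_case to match the Cypher queries later in the article, the timestamp format and duration unit are assumptions, and `is_valid_edge` is a hypothetical helper that rejects edges the Edges and Flow lists do not allow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRun:
    id: str
    agent_name: str
    model: str
    started_at: str  # ISO 8601 string; the format is an assumption


@dataclass(frozen=True)
class Decision:
    id: str
    reasoning: str
    confidence: float
    timestamp: str


@dataclass(frozen=True)
class ToolCall:
    tool_name: str
    input: str       # serialized JSON
    output: str      # serialized JSON
    duration: float  # unit is an assumption: seconds


@dataclass(frozen=True)
class Context:
    source: str
    content_hash: str
    retrieved_at: str
    stale: bool


# (source type, relationship, target type) triples taken from the Edges and Flow lists.
ALLOWED_EDGES = {
    (AgentRun, "MADE", Decision),
    (Decision, "TRIGGERED", ToolCall),
    (Decision, "DELEGATED_TO", AgentRun),
    (Context, "PROVIDED_CONTEXT", Decision),
    (Context, "PROVIDED_CONTEXT", AgentRun),
}


def is_valid_edge(source, rel, target):
    """True when the edge matches one of the allowed triples."""
    return (type(source), rel, type(target)) in ALLOWED_EDGES


# Illustrative values only; none of these come from a real trace.
run = AgentRun("run-1", "bom_reader", "example-model", "2024-01-01T09:18:32Z")
decision = Decision("d-1", "spec lists solvent type", 0.9, "2024-01-01T09:22:47Z")
ctx = Context("spec_v2.json", "abc123", "2023-12-11T00:00:00Z", True)

print(is_valid_edge(ctx, "PROVIDED_CONTEXT", decision))
print(is_valid_edge(run, "TRIGGERED", decision))
```

Checking edges at write time keeps malformed traces out of the graph, so later queries can rely on the shape of every path.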

The Decision Graph

    AgentRun(#BOM-7)
    ├─ Decision (Retrieve BOM)
    │  └─ Context (spec_v2.json) ← STALE
    │
    ├─ Decision (Select Supplier)
    │  ├─ PROVIDED_CONTEXT ← Context (spec_v2.json)
    │  └─ TRIGGERED → ToolCall (query_supplier)
    │
    └─ Decision (Place Order) ← FLAGGED
       └─ TRIGGERED → ToolCall (place_order qty 500)

You need a database that stores decisions as they happen through streaming ingestion. You need a system that lets you query causality directly to show every decision influenced by a specific stale context. You need a platform that visualizes the decision graph natively and supports deep pattern matching. Neo4j handles all four of these requirements.

You would not debug a distributed microservice system with a basic tail command. Why are you debugging multi-agent systems with grep?

Here is the ingestion pattern you need to implement. We use Cypher to write the trace data directly into Neo4j.

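    MERGE (run:AgentRun {id: $run_id})
    CREATE (d:Decision)
    SET d += $props
    MERGE (run)-[:MADE]->(d)
    WITH d
    FOREACH (ctx IN $contexts |
      MERGE (c:Context {source: ctx.source})
      SET c += ctx
      MERGE (c)-[:PROVIDED_CONTEXT]->(d)
    )

We make specific design decisions here. We use MERGE instead of CREATE for the runs and contexts so reingesting a trace does not duplicate those nodes. Decisions are still written with CREATE, so a replayed trace needs a MERGE on the decision id to stay idempotent. We ingest at decision time rather than batching at the end of the run. We keep the tool output as a raw JSON string. Neo4j properties cannot hold nested maps, and Cypher dot notation does not reach inside a string, so queries either parse the JSON at read time (for example with APOC's apoc.convert.fromJsonMap) or read fields that were copied to top-level properties at ingest.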
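Neo4j property values are limited to primitives and lists of a single primitive type, so the parameter maps handed to the ingestion query have to be flat. The sketch below shows one way to prepare them in Python. The function names `to_property_map` and `build_ingest_params` are hypothetical, and JSON-encoding nested values is an assumption about how you want to store them; the parameter names `run_id`, `props`, and `contexts` match the query above.

```python
import json

PRIMITIVES = (str, int, float, bool)


def is_storable(value):
    """True for a primitive or a list holding a single primitive type."""
    if isinstance(value, PRIMITIVES):
        return True
    if isinstance(value, list):
        kinds = {type(v) for v in value}
        return len(kinds) <= 1 and all(isinstance(v, PRIMITIVES) for v in value)
    return False


def to_property_map(record):
    """Keep storable values as they are and JSON-encode everything else."""
    props = {}
    for key, value in record.items():
        if value is None:
            continue  # skip missing values instead of writing nulls
        props[key] = value if is_storable(value) else json.dumps(value, sort_keys=True)
    return props


def build_ingest_params(run_id, decision, contexts):
    """Build the $run_id, $props and $contexts parameters for the ingestion query."""
    return {
        "run_id": run_id,
        "props": to_property_map(decision),
        "contexts": [to_property_map(c) for c in contexts],
    }


# Illustrative values only.
params = build_ingest_params(
    "run-42",
    {"id": "d-1", "reasoning": "spec lists solvent type", "confidence": 0.9,
     "inputs": {"sku": "X1"}},
    [{"source": "spec_v2.json", "content_hash": "abc123", "stale": True}],
)
print(params["props"]["inputs"])
```

With the official Python driver, a dictionary like this would typically be passed as the parameters argument of `session.run`. Treat that wiring as an assumption about your setup, not something shown in this article.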

Once the traces were in the graph, we did not grep the logs. We ran the following Cypher query to find the root cause.

    MATCH (run:AgentRun)-[:MADE]->(d:Decision)-[:TRIGGERED]->(tc:ToolCall)
    WHERE tc.tool_name = 'place_order'
    // tc.output is a JSON string; parsing it assumes the APOC library is installed
    WITH run, d, tc, apoc.convert.fromJsonMap(tc.output) AS out
    WHERE out.quantity > 100
      AND out.verified = false
    MATCH (d)<-[:PROVIDED_CONTEXT]-(ctx:Context)
    WHERE ctx.stale = true
    RETURN run.id, d.reasoning, ctx.source, tc.output
    ORDER BY d.timestamp

This query immediately returned the exact run ID, the flawed reasoning, and the exact stale context file that caused the purchase. We went from a massive production incident to a verified root cause in 40 minutes.

The problem was never that we could not log the decision. The problem was that we could not query the causality. Logs are great for recording what happened. Graphs are absolutely necessary for understanding why it happened.

Graph Evals: Assertions as Cypher Queries

We evaluate how this changes our testing strategy. Traditional evaluations check basic outputs. They ask if the LLM called the right tool or if the output was valid JSON. This is essentially a basic unit test on a ToolCall node.

Graph evaluations completely change this paradigm. They check systemic causality. They ask if any stale context influenced a high value order. They ask if a flagged decision propagated to downstream agents.

Graph Eval Query Pattern

    MATCH
      (Context)-[:PROVIDED_CONTEXT]->(Decision)-[:TRIGGERED]->(ToolCall)
    WHERE
      Context.stale = true
      AND ToolCall.tool_name =~ '.*order.*'

Pattern matching makes assertions declarative rather than procedural.

Take this first example of a Stale Context Check. We want to catch any order where the context source was flagged as stale. We write an assertion as a graph traversal.

    MATCH (c:Context)-[:PROVIDED_CONTEXT]->(d:Decision)-[:TRIGGERED]->(tc:ToolCall)
    WHERE c.stale = true
      AND tc.tool_name =~ '.*order.*'
    // tc.output is a JSON string; parsing it assumes the APOC library is installed
    WITH c, d, apoc.convert.fromJsonMap(tc.output) AS out
    RETURN c.source, d.reasoning, out.quantity AS quantity
    ORDER BY quantity DESC

This single query catches any order placed based on outdated specifications, cached inventory data, or old supplier information.

Take a second example of checking for Multi Agent Context Drift. We want to surface cross agent contamination where a bad decision infects the rest of the cluster.

    MATCH path = (r1:AgentRun)-[:MADE|DELEGATED_TO*2..8]->(r2:AgentRun)
    WHERE ANY(n IN nodes(path) WHERE n.flagged = true)
    RETURN r1.agent_name, r2.agent_name, length(path) AS chain_depth

The variable-length pattern alternates MADE and DELEGATED_TO edges, so a single path can span up to four handoffs. This query catches the exact moment Agent A makes a flagged decision and delegates the flawed state to Agent B, which then poisons Agent C. You see the entire contamination chain instantly.
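The same contamination check can be sketched outside the database as a breadth-first walk. This is a simplified in-memory stand-in for the Cypher traversal, not a replacement for it. The ids (`runA`, `dA`, and so on) and the `contaminated_runs` function are hypothetical, and the walk starts only from flagged decisions.

```python
from collections import deque

# (source, relationship, target); every id here is made up for illustration.
edges = [
    ("runA", "MADE", "dA"),
    ("dA", "DELEGATED_TO", "runB"),
    ("runB", "MADE", "dB"),
    ("dB", "DELEGATED_TO", "runC"),
    ("runD", "MADE", "dD"),  # unrelated run, never touched by the flagged decision
]
runs = {"runA", "runB", "runC", "runD"}
flagged = {"dA"}


def contaminated_runs(edges, flagged, runs):
    """Map each agent run reachable from a flagged decision to its hop count."""
    successors = {}
    for src, rel, dst in edges:
        if rel in ("MADE", "DELEGATED_TO"):
            successors.setdefault(src, []).append(dst)
    depth = {}
    seen = set(flagged)
    queue = deque((node, 0) for node in sorted(flagged))
    while queue:
        node, hops = queue.popleft()
        for nxt in successors.get(node, []):
            if nxt in seen:
                continue
            seen.add(nxt)
            if nxt in runs:
                depth[nxt] = hops + 1
            queue.append((nxt, hops + 1))
    return depth


chain = contaminated_runs(edges, flagged, runs)
print(chain)
```

Here `runB` is one hop from the flagged decision and `runC` is three hops away, while `runD` never appears because no edge connects it to the flagged decision.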

How many of your evaluations check basic outputs versus checking deep causality? If you are only checking whether the agent called the right tool, you are completely missing the systemic failure mode that costs a quarter of a million dollars.

Evaluations at the individual call level miss the system level failure mode. A correct tool call executed with the wrong context still produces a catastrophic outcome. Graph evaluations let you assert on context propagation instead of just verifying local decisions.

Before and After Timeline

We quantify exactly what changed when we shifted our observability architecture.

Before

Day 1: Pull massive logs and grep for the SKU

Day 2: Manually trace the context across seven agents

Day 3: Finally locate the root cause file

Before we implemented graph evaluations, reaching the root cause took three days on the calendar (72 hours) and 90 hours of engineering time. We required five senior engineers on the incident. We had absolutely no visibility into context flow and relied entirely on manual reconstruction. We tracked prompt versions through pure guesswork by diffing files across agents. Our confidence in the final fix was incredibly low because we could not verify the propagation paths.

After

Hour 1: Run a single Cypher query and locate the root cause instantly

After we implemented graph evaluations our root cause time dropped to 40 minutes. We required exactly one engineer on the incident. We achieved full visibility into the context flow using the Neo4j Browser. Prompt version tracking was natively stored in the Decision nodes and instantly queryable. Our confidence in the fix was absolute because our regression test was a mathematical graph assertion.

Calculate this right now. How much does 90 hours of senior engineering time cost your organization? That number is the hidden tax of relying on flat observability for multi-agent systems.

The economic impact is staggering. Under the old system we spent $18,000 in raw engineering payroll to debug an incident with $240,000 in direct costs, while suffering unknown downstream damage to client trust. Under the new system we spent roughly $100 in engineering time and the graph assertions caught the next incident in staging before it ever reached production.
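As a rough check on these figures, the arithmetic can be sketched in a few lines of Python. The only inputs are numbers quoted in this article. The blended hourly rate is derived from them and is an assumption about how the payroll figure was computed.

```python
payroll_before = 18_000  # dollars of engineering payroll, from the article
hours_before = 90        # engineering hours, from the article
minutes_after = 40       # one engineer, from the article

# Implied blended rate for a senior engineer (an assumption derived from the two figures).
hourly_rate = payroll_before / hours_before

# Cost of the 40 minute investigation at that same rate.
cost_after = hourly_rate * minutes_after / 60

print(f"implied rate: ${hourly_rate:.0f} per hour")
print(f"cost of a 40 minute investigation: ${cost_after:.0f}")
```

At the implied rate, a 40 minute investigation by one engineer comes to a little over $130, which is the same order of magnitude as the figure quoted above.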

The architectural shift is clear. We stopped adding more flat logging hoping to manually reconstruct causality. We started modeling decisions as graphs and querying the causality directly.

Observability for agents is not about collecting more flat data. It is about modeling the correct structure of the workflow. Flat log volume grows with every agent you add, but the number of possible context paths between agents grows far faster, so manual reconstruction gets harder with each one. Graph evaluations hold up better because every structural assertion you write is a reusable query that keeps running as the system grows.

What Should You Do?

Engineering leaders must take concrete steps to fix this observability gap immediately.

Step 1

You must start modeling your agent traces as graphs today. Even if you are not running Neo4j in production yet you must start thinking in nodes and edges. Stop logging that an agent placed an order. Start logging that an Agent Run made a Decision using specific Context which triggered a specific Tool Call.

Step 2

You must instrument your context propagation. Every single time an agent passes context to another agent you must log exactly what context was passed and where it came from and when it was retrieved and whether it is marked as stale.
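A context handoff record could look like the sketch below. The `context_record` function, the `passed_from` and `passed_to` fields, and the seven day freshness window are all assumptions for illustration. Deriving staleness from age is only one possible policy, and your sources may need a version check instead.

```python
import hashlib
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(days=7)  # assumed freshness window; tune per source


def context_record(source, content, retrieved_at, now=None,
                   passed_from=None, passed_to=None):
    """Describe one context handoff: what was passed, from where, when, and how fresh.

    content must be bytes and retrieved_at must be timezone-aware.
    """
    now = now or datetime.now(timezone.utc)
    return {
        "source": source,
        "content_hash": hashlib.sha256(content).hexdigest(),
        "retrieved_at": retrieved_at.isoformat(),
        "stale": now - retrieved_at > MAX_AGE,
        "passed_from": passed_from,
        "passed_to": passed_to,
    }


# Illustrative values: a file cached three weeks before the handoff.
now = datetime(2024, 1, 22, tzinfo=timezone.utc)
record = context_record(
    "spec_v2.json", b'{"solvent": "A"}', now - timedelta(weeks=3),
    now=now, passed_from="agent_2", passed_to="agent_5",
)
fresh = context_record("spec_v3.json", b'{"solvent": "B"}', now - timedelta(hours=1), now=now)

print(record["stale"], fresh["stale"])
```

The content hash lets you prove later that two agents saw the same bytes, and the stale flag is computed once at handoff time so every downstream query can filter on it.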

Step 3

You need to set up a graph database. If you are not ready for production infrastructure you can download Neo4j Desktop locally. Ingest a few traces manually. Write your first Cypher query and visualize the decision graph. Once you understand the immense value you can deploy Neo4j Aura on their cloud managed tier and stream decisions in real time from your agent framework.

Step 4

You must write your first graph evaluation. Pick one critical failure mode you actually care about. Maybe it is stale context leading to bad decisions. Maybe it is a high value action triggered without human verification. Write that failure mode as a Cypher query and run it after every single agent execution. If the query returns a risk count greater than zero you pause the pipeline and investigate.
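The gate described in this step can be sketched in Python against an in-memory copy of the graph. In production the match would be a Cypher query run against Neo4j. Here `stale_order_violations`, `gate`, and `PipelinePaused` are hypothetical names, and the substring test on the tool name stands in for the `.*order.*` regular expression used earlier.

```python
class PipelinePaused(Exception):
    """Raised when a graph evaluation finds at least one violation."""


def stale_order_violations(nodes, edges):
    """Find Context -> Decision -> ToolCall paths with stale context and an order tool."""
    violations = []
    for c, rel1, d in edges:
        if rel1 != "PROVIDED_CONTEXT" or not nodes[c].get("stale"):
            continue
        for d2, rel2, tc in edges:
            if d2 == d and rel2 == "TRIGGERED" and "order" in nodes[tc].get("tool_name", ""):
                violations.append((c, d, tc))
    return violations


def gate(nodes, edges):
    """Return 0 when the run is clean; raise PipelinePaused otherwise."""
    violations = stale_order_violations(nodes, edges)
    if violations:
        raise PipelinePaused(f"{len(violations)} stale context order path(s): {violations}")
    return 0


# Illustrative trace: one stale context feeding a decision that placed an order.
nodes = {
    "ctx1": {"source": "spec_v2.json", "stale": True},
    "d1": {"reasoning": "spec lists solvent type"},
    "tc1": {"tool_name": "place_order"},
}
edges = [("ctx1", "PROVIDED_CONTEXT", "d1"), ("d1", "TRIGGERED", "tc1")]

try:
    gate(nodes, edges)
    paused = False
except PipelinePaused as exc:
    paused = True
    print(f"pipeline paused: {exc}")
```

Raising an exception instead of returning a count makes the pause hard to ignore, because a caller that forgets to check the result still stops.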

Step 5

You must build a decision graph dashboard. Use Neo4j Browser to visualize which agents are making the most flagged decisions and which context sources are most frequently stale.

Make your causality visible and make it queryable. Stop debugging multi-agent systems with grep and start querying the decision graph.

What’s Next?

We evaluate this architecture and see exactly where the industry is heading.

Now

We are doing post hoc graph evaluations. We build traces as graphs and query them with Cypher to catch errors before the next run.

Near future

We will use real time evaluation hooks. We will stream decisions into Neo4j as they happen and flag anomalies mid run using live graph assertions. Imagine Agent 5 is about to place a massive order. Before executing the final API call the system runs a Cypher query checking for stale context paths. If the query detects a violation the agent pauses automatically and surfaces the decision graph to a human for manual approval.

Ultimate goal

The ultimate goal is autonomous self correction. Agents will continuously query their own decision graphs to detect context drift in real time. If they detect a structural anomaly they will reroute their logic or pause execution entirely without human intervention.

Graph evaluations are not a nice to have feature. They are the fundamental difference between debugging in minutes versus debugging in days. That operational gap only grows as you add more agents to your cluster.

Today we debug agents after they fail. Tomorrow agents will debug themselves before they fail. But you cannot self correct without understanding causality. You cannot query causality without building graphs. Observability is not the endgame here. Autonomous self correction is the endgame.

Conclusion

The $240,000 order was not a failure of the language model. It was not a failure of the prompt engineering. It was not even a failure of the multi-agent architecture itself.

It was a catastrophic failure of enterprise observability.

Flat logs can tell you exactly what happened. They can never tell you why it happened. Artificial intelligence agents do not think in linear sequences. They branch and retry and delegate and loop. Their autonomous decisions form a complex graph and never a simple timeline.

If you are deploying agents in a production environment you desperately need observability that matches their actual internal structure. You must model your traces as graphs. You must query causality using Cypher. You must write structural assertions on context propagation instead of just verifying final outputs.

The next time your agent makes a disastrous decision you will not spend 90 hours grepping through flat JSON files. You will run one single query. You will instantly see the exact decision path. You will fix the poisoned context and add a new regression test. You will finish the entire investigation in 40 minutes instead of 3 days.

Agents think in graphs. So should your evaluations.